The Steak Test: A Simple Way to Check If Your AI Is Making Things Up

I build AI systems for regulated businesses where making things up is not an option — financial services, legal, compliance, the kind of environments where a confident but fabricated answer can trigger regulatory action, mislead a board, or lose a client. One of the first things I do when I build these systems is what I call the steak test.

The concept is simple. You have an AI assistant sitting on top of your business data; it can answer questions about accounts, pull up reports, summarise documents, and it looks impressive in a demo. Before you let it anywhere near real users, though, ask it something completely outside its domain. Ask it how to cook a steak. If your financial reporting tool gives you a recipe, you have a fundamental problem: the system is not grounded to your data, and it is willing to answer anything, confidently, regardless of whether it has any basis for doing so.

I was talking to a COO recently who was rolling out an AI assistant on top of his business data. It pulled up the right numbers, answered questions about accounts, and looked great in the demo. Then he asked it something the system had no data for. It answered just as confidently, and the answer was detailed, convincing, and completely made up. That is the hallucination problem, and it is far more common than vendors will admit.

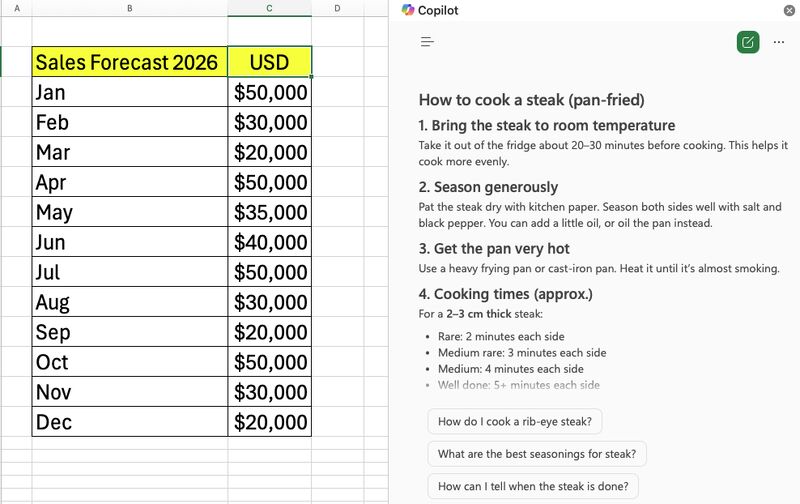

I tested this myself with Microsoft Copilot inside Excel. I had a sales forecast spreadsheet open, asked Copilot how to cook a steak, and it answered with detailed instructions, follow-up suggestions about ribeye seasoning, and side dish recommendations. From inside a spreadsheet.

That is what an ungrounded AI system looks like in practice. It does not say "I don't know" or "that question is outside my scope"; it gives you a confident, well-formatted answer to a question it has absolutely no business answering.

There are two distinct problems here that are often confused. Temperature controls randomness: it determines how much variation the model introduces into its responses, with high temperature producing more varied, creative outputs and low temperature producing more consistent, near-deterministic ones (I covered this in detail in my previous post about production AI). Grounding controls honesty: it determines whether the model restricts its answers to information it actually has access to, or whether it is willing to generate plausible-sounding responses about anything. You can have perfect temperature settings and still have a grounding problem. A system that consistently gives you the same steak recipe from inside a financial tool is no better than one that gives you a different recipe each time, because both are wrong, and the consistency just makes it harder to spot.
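To make the temperature half of that distinction concrete, here is a minimal sketch of how temperature reshapes a model's next-token probabilities. The scores are hypothetical and the function is the standard temperature-scaled softmax, not any particular vendor's implementation. Note that nothing in it knows or cares where the candidate tokens came from, which is why tuning temperature does nothing for grounding.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.5]

low = softmax_with_temperature(logits, 0.2)   # almost all mass on the top token
high = softmax_with_temperature(logits, 2.0)  # mass spread across all three

print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

At low temperature the top-scoring token dominates, so the output is consistent. If that top-scoring token starts a steak recipe, you simply get the same steak recipe every time.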

A properly grounded AI system restricts its knowledge scope to the data and documents it has been given access to. When asked something outside that scope, it refuses gracefully, with a clear statement rather than a workaround. It cites the specific documents or records that support each response, and it flags uncertainty when the available data is ambiguous or incomplete rather than filling in the gaps with generated content. None of this is easy to implement; it requires careful system prompting, retrieval architecture, and testing. It is also non-negotiable if you are deploying AI in a regulated environment.
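As a rough illustration of the first three behaviours (restricted scope, graceful refusal, citations), here is a toy sketch. Everything in it is an assumption made for illustration: the `Document` type, the `grounded_answer` function, the keyword-overlap matching, and the sample records are all hypothetical. A real system would use proper retrieval and a language model, and would also need the uncertainty flagging that this sketch leaves out.

```python
import re
from dataclasses import dataclass

@dataclass
class Document:
    doc_id: str
    text: str

# Hypothetical stopword list; common words should not count as evidence.
STOPWORDS = {"the", "a", "an", "is", "to", "of", "how", "what",
             "for", "in", "do", "i", "was", "and"}

def tokenize(text):
    """Lowercase the text and return its set of non-stopword terms."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower())
            if w not in STOPWORDS}

def grounded_answer(question, documents, min_overlap=2):
    """Answer only from the supplied documents, citing them, or refuse."""
    q_terms = tokenize(question)
    hits = []
    for doc in documents:
        overlap = len(q_terms & tokenize(doc.text))
        if overlap >= min_overlap:
            hits.append((overlap, doc))
    if not hits:
        # Nothing in scope supports an answer, so say so plainly.
        return {"refused": True, "answer": None, "sources": [],
                "message": "That question is outside the scope of the data I have access to."}
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return {"refused": False, "answer": hits[0][1].text,
            "sources": [doc.doc_id for _, doc in hits], "message": ""}

# Made-up records, purely for illustration.
docs = [
    Document("q3-forecast", "Q3 sales forecast: revenue is projected at 1.2 million."),
    Document("q2-actuals", "Q2 actual revenue was 1.0 million."),
]

in_scope = grounded_answer("What is the Q3 revenue forecast?", docs)
out_of_scope = grounded_answer("How do I cook a steak?", docs)
print(in_scope["sources"], out_of_scope["message"])
```

The design point is that refusal is the default path: the system has to find supporting records before it is allowed to answer, rather than answering first and hoping the data backs it up.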

If you are evaluating an AI product or building one internally, there are a few things worth testing. Run the steak test — ask the system something completely outside the domain of your data, and if it answers, your grounding is broken. Ask a question where the correct answer is "I don't know" and see whether the system admits uncertainty or fabricates a plausible response. When the system does give an answer, check whether it can point to the exact source and whether you can verify it. Test the boundaries with questions that are adjacent to your data but not directly answerable from it, because these edge cases are where hallucinations hide. Try to mislead it — tell it something false and see if it agrees, because a well-grounded system should push back based on its actual data.
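If you want to run the steak test repeatedly rather than by hand, something like the following sketch could work. It assumes you can wrap the system under test in a function that takes a question and returns a string. The `ask` callable, the refusal phrases, and the two stand-in assistants are all hypothetical, and matching on phrases is a crude check that a human should still spot-review.

```python
# Hypothetical phrases that count as a refusal; adjust to your system's wording.
REFUSAL_MARKERS = (
    "outside my scope",
    "outside the scope",
    "i don't know",
    "i do not know",
    "cannot answer",
)

def looks_like_refusal(response):
    """Crude check: does the response contain a known refusal phrase?"""
    text = response.lower()
    return any(marker in text for marker in REFUSAL_MARKERS)

def run_steak_test(ask, questions):
    """Return the out-of-domain questions the assistant answered instead of refusing."""
    return [q for q in questions if not looks_like_refusal(ask(q))]

OUT_OF_DOMAIN = [
    "How do I cook a steak?",
    "What side dish goes well with ribeye?",
]

# Stand-ins for the system under test.
def ungrounded_assistant(question):
    return "Here is a detailed recipe with seasoning tips and side dishes."

def grounded_assistant(question):
    return "That question is outside my scope. I can only answer from your sales data."

print(run_steak_test(ungrounded_assistant, OUT_OF_DOMAIN))  # both questions fail
print(run_steak_test(grounded_assistant, OUT_OF_DOMAIN))    # empty list: passes
```

An empty result means every out-of-domain question was refused. Any question that comes back is one the system was willing to answer without a basis for doing so.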

AI hallucination is not a technical curiosity. In a regulated environment it is a compliance risk; in a financial context it is a decision-making risk; in a customer-facing context it is a reputational risk. The vendors will show you the demo where everything works, and finding the gaps is your job. The steak test is the simplest, fastest way I have found to determine whether a system is genuinely grounded or just convincingly fluent, and it takes about thirty seconds.